I am worried about the way ‘data analysis’ discussions are heading – and it’s not because I am old.

My family is unusual in that I have a PhD in econometrics, my daughter has a master’s degree in applied statistics, and my son has a PhD in robotics (aka data science). Our get-togethers are a bit different because we each have distinct, strong views about how to analyse data.

Our discussions are lively, balanced and sensible. I contrast that with a Bloomberg TV interview I watched today with Mark Cuban. I knew nothing of him before but, apparently, he is a multibillionaire, has had frequent conversations with presidents Obama and Trump, and once owned ‘PayPal’. My take on his view is that he is predicting that machine learning and artificial intelligence (AI) – together with other techniques that make up data science – will replace much of human labour with ‘robots’ (I’m stretching it a bit for effect).

Cuban’s comments remind me of watching black-and-white TV in the early ’60s with my brother, mum and dad. One advert talked about “Mrs 1970” (she would ‘arrive’ after the first moon landing but the ad was made when the Beatles were almost unknown outside of Liverpool). Mrs 1970 was to have robots doing everything. Housework was to be out the window for the mums of the future. Nearly 60 years later, I go to a grandson’s birthday party to find an uncle supervising a lawn-mowing robot because it kept falling off the kerb into the road. We’re almost there!

‘Robo-advice’ is coming in and I think that is great, if it means repetitive steps can be done by computers. It makes advice cheaper for people previously excluded from higher-priced personal advice. At what I think is the other extreme, so-called ‘quant funds’, using sophisticated algorithms (algos), fell apart in the GFC.

The quant-fund algos happened to be written by people who all had similar training and, unbeknownst to the funds and their investors, were doing similar things. They all fell over together when Lehman’s crashed. And that’s where I have problems with the technology. Machines can learn from data but they can’t juggle unspecified scenarios for the future that have no historical data.

When the cost of making a poor decision is small – or easily avoided – let computers rip. But when it matters, I believe we need to stand above machine learning. (I feel a family discussion brewing.)

Recently, my data supplier, Thomson Reuters, invited me to vote on “judgement or predictive modelling” as the best way for fund managers to gain outperformance. The predictive modelling tag sounds too close to machine learning lingo for me to be comfortable. My view always has been – and always will be – measure what is useful and use it, subject to a manual over-ride. You need both.

I recently did an online course in data science from Johns Hopkins University, in Baltimore, Maryland, in the US, to make sure I was up with the program, and to be able to communicate with my kids. To my dismay, my discipline (statistics/econometrics) was only a tiny bit of the course, by their definition, and their view of stats was about at the standard I and others were at about 50 years ago. What happened to the intervening research? The emphasis in data science seems to be on predictive ability at all costs. No story or reason is necessary, nor, apparently, available. Even statistical significance is out the window! But what is worse, to me, is that there is no diagnostic testing to challenge the assumptions of the model, as there is in econometrics! To me, that means data science is living on a hope and a prayer when facing the problems I try to solve. Of course, there are other applications to which this new discipline seems well suited.

Put simply, my view is that more data is not necessarily better. Too much data makes more complicated models necessary – a point the late Professor Clive Granger, 2003 Nobel Laureate, was making in the ’60s. Faster computers now allow for even more calculations, but should we necessarily make them?

Is there a happy medium? I think so. For example, my way of predicting long-run volatility for each sector of the ASX 200, which I have previously written about in PP, does engage in machine learning, in a sense. It compares models each month with others using alternative break points in average levels of volatility. It does not react to the first signal unless it is massive, but after a few months, a break can be locked in. I automated the whole process, but I know exactly what my algo is doing at every step. And if a ‘silly’ number comes up – I would stand on it.
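To make the idea concrete, here is a minimal Python sketch of that kind of rule. It is a simplified stand-in, not the actual algo: it compares each new month with a fixed baseline average rather than comparing full models with alternative break points. The function names, the 12-month baseline, the 25% and 100% thresholds and the three-month confirmation window are all assumptions chosen for illustration.

```python
from statistics import mean


def detect_break(vols, baseline_n=12, threshold=0.25, massive=1.0, confirm_months=3):
    """Return the index of the month in which a break is locked in, or None.

    Hypothetical rule (all parameter values are illustrative assumptions):
    - the baseline is the average of the first `baseline_n` observations;
    - a month "signals" when it deviates from the baseline by at least
      `threshold` (as a fraction of the baseline);
    - a single signal is ignored unless it is at least `massive`;
    - otherwise the break is locked in only after `confirm_months`
      consecutive signals.
    """
    baseline = mean(vols[:baseline_n])
    run = 0  # number of consecutive signalling months so far
    for i in range(baseline_n, len(vols)):
        deviation = abs(vols[i] - baseline) / baseline
        if deviation >= massive:
            return i  # massive signal: react immediately
        if deviation >= threshold:
            run += 1
            if run >= confirm_months:
                return i  # sustained signal: lock the break in
        else:
            run = 0  # signal faded, so start counting again
    return None


def apply_override(model_forecast, manual_override=None):
    """Use the model's number unless a human has supplied an override."""
    return model_forecast if manual_override is None else manual_override


# Illustrative (made-up) monthly volatility figures, in per cent.
quiet = [10.0] * 12 + [10.0, 14.0, 10.0, 14.0, 14.0, 14.0]
shock = [10.0] * 12 + [25.0]

print(detect_break(quiet))   # one-off blip ignored, sustained shift confirmed
print(detect_break(shock))   # massive jump acted on at once
print(apply_override(13.2, manual_override=11.0))
```

The point of the design is that every step is visible: one odd month is ignored, a sustained shift is accepted after a few months, and the human keeps the last word through the override.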

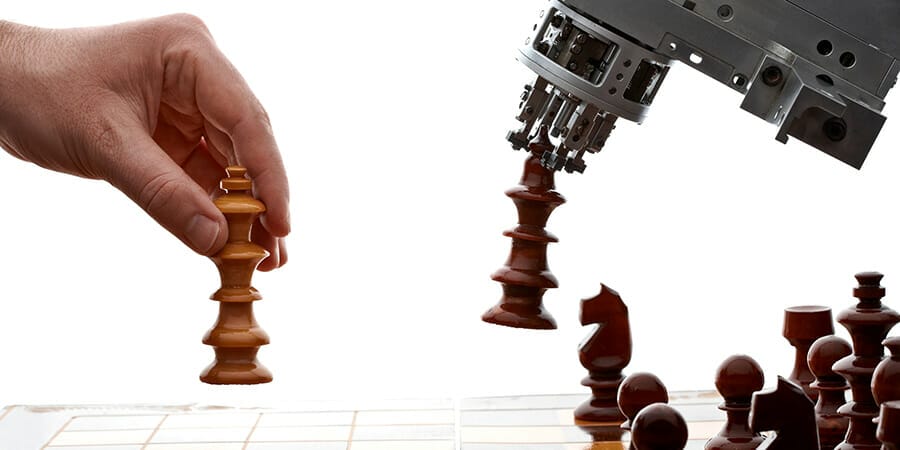

I want my kids (and others) to stun me with new techniques and understandings of data but I will never subscribe to machines doing more than helping humans, unless it’s at playing a game with well-defined rules, like chess. The brain is so much smarter. And I’m up for a competition if there are any takers (including my son) but I won’t walk up Mount Kosciuszko if I lose, like Steve Keen had to after making a ludicrous bet on house price crashes. How about a nice lunch if a machine learner can beat me on my turf?